Simulation

Given a VAR coefficient matrix and a VHAR coefficient matrix, respectively:

- sim_var(num_sim, num_burn, var_coef, var_lag, sig_error, init) generates a VAR process

- sim_vhar(num_sim, num_burn, vhar_coef, sig_error, init) generates a VHAR process
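To make the roles of the arguments concrete, here is a minimal base-R sketch of simulating a VAR(p) process with a burn-in period. The helper simulate_var_sketch() is hypothetical and is not the package's sim_var(); it assumes Gaussian errors and a coefficient matrix whose rows are grouped by lag with the constant in the last row, which is the layout that coef(ex_fit) prints in this document.

```r
# Hypothetical helper, not the package's sim_var(): simulate a VAR(p) process.
# Assumed layout of coef_mat: (m * lag [+ 1]) x m, rows grouped by lag,
# optional constant in the last row.
simulate_var_sketch <- function(num_sim, num_burn, coef_mat, lag, sig_error, init) {
  m <- ncol(coef_mat)
  has_const <- nrow(coef_mat) == m * lag + 1
  chol_sig <- chol(sig_error)          # upper factor R with t(R) %*% R = sig_error
  total <- num_sim + num_burn
  # Rows 1..lag hold the initial values; simulated rows follow.
  y <- rbind(init, matrix(0, nrow = total, ncol = m))
  for (t in seq_len(total)) {
    idx <- lag + t
    # Stack y_{t-1}, ..., y_{t-p} into one row vector (most recent lag first).
    x <- as.vector(t(y[(idx - 1):(idx - lag), , drop = FALSE]))
    if (has_const) x <- c(x, 1)
    eps <- rnorm(m) %*% chol_sig       # Gaussian error with covariance sig_error
    y[idx, ] <- x %*% coef_mat + eps
  }
  # Drop the initial values and the burn-in draws.
  y[(lag + num_burn + 1):(lag + total), , drop = FALSE]
}

# Usage: a stable bivariate VAR(1) with a constant term.
set.seed(1)
A <- rbind(diag(0.5, 2), c(1, 2))
y_sim <- simulate_var_sketch(200, 50, A, 1, diag(2), matrix(0, 1, 2))
dim(y_sim)
```

The burn-in draws are discarded so that the returned sample depends less on the arbitrary initial values.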

We use the coefficient matrix estimated by VAR(5) in the introduction vignette.

Consider

coef(ex_fit)

#> GVZCLS OVXCLS EVZCLS VXFXICLS

#> GVZCLS_1 0.93302 -0.02332 -0.007712 -0.03853

#> OVXCLS_1 0.05429 1.00399 0.009806 0.01062

#> EVZCLS_1 0.06794 -0.13900 0.983825 0.07783

#> VXFXICLS_1 -0.03399 0.03404 0.020719 0.93350

#> GVZCLS_2 -0.07831 0.08753 0.019302 0.08939

#> OVXCLS_2 -0.04770 0.01480 0.003888 0.04392

#> EVZCLS_2 0.08082 0.26704 -0.110017 -0.07163

#> VXFXICLS_2 0.05465 -0.12154 -0.040349 0.04012

#> GVZCLS_3 0.04332 -0.02459 -0.011041 -0.02556

#> OVXCLS_3 -0.00594 -0.09550 0.006638 -0.04981

#> EVZCLS_3 -0.02952 -0.04926 0.091056 0.01204

#> VXFXICLS_3 -0.05876 -0.05995 0.003803 -0.02027

#> GVZCLS_4 -0.00845 -0.04490 0.005415 -0.00817

#> OVXCLS_4 0.01070 -0.00383 -0.022806 -0.05557

#> EVZCLS_4 -0.01971 -0.02008 -0.016535 0.08229

#> VXFXICLS_4 0.06139 0.14403 0.019780 -0.10271

#> GVZCLS_5 0.07301 0.01093 -0.010994 -0.01526

#> OVXCLS_5 -0.01658 0.07401 0.007035 0.04297

#> EVZCLS_5 -0.08794 -0.06189 0.021082 -0.02465

#> VXFXICLS_5 -0.01739 0.00169 0.000335 0.09384

#> const 0.57370 0.15256 0.132842 0.87785

ex_fit$covmat

#> GVZCLS OVXCLS EVZCLS VXFXICLS

#> GVZCLS 1.157 0.403 0.127 0.332

#> OVXCLS 0.403 1.740 0.115 0.438

#> EVZCLS 0.127 0.115 0.144 0.127

#> VXFXICLS 0.332 0.438 0.127 1.028

Then

m <- ncol(ex_fit$coefficients)

# generate VAR(5)-----------------

y <- sim_var(

num_sim = 1500,

num_burn = 100,

var_coef = coef(ex_fit),

var_lag = 5L,

sig_error = ex_fit$covmat,

init = matrix(0L, nrow = 5L, ncol = m)

)

# colname: y1, y2, ...------------

colnames(y) <- paste0("y", 1:m)

head(y)

#> y1 y2 y3 y4

#> [1,] 10.4 23.7 7.93 25.6

#> [2,] 11.5 22.8 8.57 25.6

#> [3,] 17.1 24.3 8.66 29.2

#> [4,] 16.9 24.1 8.48 29.5

#> [5,] 16.5 23.2 8.29 29.2

#> [6,] 16.3 24.2 8.31 28.7

h <- 20

y_eval <- divide_ts(y, h)

y_train <- y_eval$train # train

y_test <- y_eval$test # test

Fitting Models

BVAR(5)

Minnesota prior

# hyper parameters---------------------------

y_sig <- apply(y_train, 2, sd) # sigma vector

y_lam <- .2 # lambda

y_delta <- rep(.2, m) # delta vector (set to .2 here; a 0 vector would correspond to a stationary RV prior)

eps <- 1e-04 # very small number

spec_bvar <- set_bvar(y_sig, y_lam, y_delta, eps)

# fit---------------------------------------

model_bvar <- bvar_minnesota(y_train, p = 5, bayes_spec = spec_bvar)

BVHAR

BVHAR-S

spec_bvhar_v1 <- set_bvhar(y_sig, y_lam, y_delta, eps)

# fit---------------------------------------

model_bvhar_v1 <- bvhar_minnesota(y_train, bayes_spec = spec_bvhar_v1)

BVHAR-L

# weights----------------------------------

y_day <- rep(.1, m)

y_week <- rep(.01, m)

y_month <- rep(.01, m)

# spec-------------------------------------

spec_bvhar_v2 <- set_weight_bvhar(

y_sig,

y_lam,

eps,

y_day,

y_week,

y_month

)

# fit--------------------------------------

model_bvhar_v2 <- bvhar_minnesota(y_train, bayes_spec = spec_bvhar_v2)

Splitting

You can forecast using the predict() method with the objects above. Set the forecast horizon with the n_ahead argument.

In addition, the forecast result has class predbvhar, which supports the following methods:

- Plot: autoplot.predbvhar()

- Evaluation: mse.predbvhar(), mae.predbvhar(), mape.predbvhar(), mase.predbvhar(), mrae.predbvhar(), relmae.predbvhar()

- Relative error: rmape.predbvhar(), rmase.predbvhar(), rmsfe.predbvhar(), rmafe.predbvhar()

VAR

(pred_var <- predict(model_var, n_ahead = h))

#> y1 y2 y3 y4

#> [1,] 20.1 26.8 10.7 34.1

#> [2,] 19.8 26.3 10.6 33.6

#> [3,] 19.7 26.2 10.6 33.1

#> [4,] 19.7 26.0 10.6 32.7

#> [5,] 19.7 26.0 10.6 32.3

#> [6,] 19.6 26.0 10.6 32.0

#> [7,] 19.5 25.9 10.6 31.7

#> [8,] 19.5 25.8 10.5 31.4

#> [9,] 19.4 25.7 10.5 31.1

#> [10,] 19.4 25.6 10.5 30.9

#> [11,] 19.4 25.5 10.5 30.7

#> [12,] 19.3 25.4 10.4 30.4

#> [13,] 19.3 25.3 10.4 30.2

#> [14,] 19.2 25.2 10.4 30.0

#> [15,] 19.2 25.1 10.4 29.8

#> [16,] 19.2 25.0 10.3 29.7

#> [17,] 19.1 24.9 10.3 29.5

#> [18,] 19.1 24.8 10.3 29.4

#> [19,] 19.1 24.7 10.3 29.2

#> [20,] 19.1 24.5 10.2 29.1

class(pred_var)

#> [1] "predbvhar"

names(pred_var)

#> [1] "process" "forecast" "se" "lower" "upper"

#> [6] "lower_joint" "upper_joint" "y"

The package provides the following evaluation functions:

- mse(predbvhar, test): MSE

- mape(predbvhar, test): MAPE
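As a rough illustration of what these two losses measure, the sketch below computes them column by column in base R. The helpers mse_sketch() and mape_sketch() are hypothetical stand-ins, not the package's methods, and the MAPE here is expressed as a fraction; the package's scaling may differ.

```r
# Hypothetical helpers: column-wise losses between a forecast matrix and
# a test matrix of the same shape. Not the package's mse() / mape().
mse_sketch <- function(forecast, test) colMeans((test - forecast)^2)
mape_sketch <- function(forecast, test) colMeans(abs((test - forecast) / test))

# Toy data: 3 forecast steps for 2 variables.
fc <- matrix(c(1, 2, 3, 10, 20, 30), ncol = 2,
             dimnames = list(NULL, c("y1", "y2")))
obs <- matrix(c(2, 2, 2, 10, 25, 40), ncol = 2,
              dimnames = list(NULL, c("y1", "y2")))

mse_sketch(fc, obs)   # one MSE per variable
mape_sketch(fc, obs)  # one MAPE per variable
```

Each function returns one value per variable, which matches the shape of the mse() output shown in this section.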

(mse_var <- mse(pred_var, y_test))

#> y1 y2 y3 y4

#> 4.924 6.479 0.301 1.749

VHAR

(pred_vhar <- predict(model_vhar, n_ahead = h))

#> y1 y2 y3 y4

#> [1,] 19.9 26.5 10.6 34.0

#> [2,] 19.8 26.1 10.6 33.5

#> [3,] 19.7 25.9 10.6 33.1

#> [4,] 19.7 25.7 10.5 32.7

#> [5,] 19.6 25.6 10.5 32.3

#> [6,] 19.6 25.5 10.5 31.9

#> [7,] 19.6 25.4 10.4 31.5

#> [8,] 19.6 25.3 10.4 31.2

#> [9,] 19.6 25.2 10.4 30.9

#> [10,] 19.5 25.1 10.4 30.6

#> [11,] 19.5 25.1 10.4 30.3

#> [12,] 19.5 25.0 10.3 30.0

#> [13,] 19.5 25.0 10.3 29.8

#> [14,] 19.5 24.9 10.3 29.6

#> [15,] 19.5 24.9 10.3 29.4

#> [16,] 19.5 24.9 10.3 29.2

#> [17,] 19.5 24.9 10.3 29.0

#> [18,] 19.5 24.9 10.3 28.9

#> [19,] 19.5 24.8 10.3 28.7

#> [20,] 19.5 24.8 10.3 28.6

MSE:

(mse_vhar <- mse(pred_vhar, y_test))

#> y1 y2 y3 y4

#> 4.002 6.965 0.246 2.235

BVAR

(pred_bvar <- predict(model_bvar, n_ahead = h))

#> y1 y2 y3 y4

#> [1,] 2.14e+01 22.5 1.44e+01 29.2

#> [2,] 2.43e+01 20.0 2.33e+01 26.6

#> [3,] 3.08e+01 18.8 4.32e+01 25.4

#> [4,] 4.51e+01 18.5 8.79e+01 24.9

#> [5,] 7.61e+01 19.2 1.88e+02 24.9

#> [6,] 1.43e+02 21.8 4.11e+02 25.7

#> [7,] 2.88e+02 28.2 9.08e+02 27.9

#> [8,] 6.02e+02 42.6 2.02e+03 32.8

#> [9,] 1.28e+03 74.6 4.50e+03 43.9

#> [10,] 2.74e+03 145.4 1.00e+04 68.4

#> [11,] 5.90e+03 301.6 2.24e+04 122.4

#> [12,] 1.27e+04 646.0 5.00e+04 241.8

#> [13,] 2.74e+04 1405.8 1.12e+05 505.3

#> [14,] 5.91e+04 3082.0 2.49e+05 1087.3

#> [15,] 1.27e+05 6780.4 5.56e+05 2372.6

#> [16,] 2.74e+05 14941.9 1.24e+06 5211.7

#> [17,] 5.91e+05 32955.3 2.77e+06 11484.2

#> [18,] 1.27e+06 72719.7 6.19e+06 25343.9

#> [19,] 2.74e+06 160513.4 1.38e+07 55974.0

#> [20,] 5.88e+06 354380.2 3.09e+07 123677.7

MSE:

(mse_bvar <- mse(pred_bvar, y_test))

#> y1 y2 y3 y4

#> 2.21e+12 7.90e+09 5.97e+13 9.61e+08

BVHAR

VAR-type Minnesota

(pred_bvhar_v1 <- predict(model_bvhar_v1, n_ahead = h))

#> y1 y2 y3 y4

#> [1,] 20.1 21.3 10.34 27.2

#> [2,] 19.9 19.0 10.00 25.1

#> [3,] 19.8 18.0 9.69 24.5

#> [4,] 19.7 17.6 9.40 24.2

#> [5,] 19.6 17.3 9.13 24.1

#> [6,] 19.5 17.0 8.89 24.0

#> [7,] 19.4 16.8 8.67 23.9

#> [8,] 19.3 16.7 8.47 23.9

#> [9,] 19.2 16.6 8.29 23.8

#> [10,] 19.2 16.5 8.13 23.8

#> [11,] 19.1 16.4 7.98 23.8

#> [12,] 19.0 16.4 7.85 23.8

#> [13,] 19.0 16.3 7.74 23.7

#> [14,] 18.9 16.2 7.63 23.7

#> [15,] 18.9 16.2 7.53 23.7

#> [16,] 18.9 16.1 7.45 23.7

#> [17,] 18.8 16.1 7.37 23.7

#> [18,] 18.8 16.1 7.30 23.6

#> [19,] 18.8 16.0 7.24 23.6

#> [20,] 18.7 16.0 7.18 23.6

MSE:

(mse_bvhar_v1 <- mse(pred_bvhar_v1, y_test))

#> y1 y2 y3 y4

#> 5.87 112.08 5.26 53.00

VHAR-type Minnesota

(pred_bvhar_v2 <- predict(model_bvhar_v2, n_ahead = h))

#> y1 y2 y3 y4

#> [1,] 3.71e+01 1.88e+01 7.56e+00 2.52e+01

#> [2,] 2.27e+02 1.71e+01 7.04e+00 2.41e+01

#> [3,] 2.30e+03 2.02e+01 7.84e+00 2.50e+01

#> [4,] 2.51e+04 5.94e+01 1.81e+01 3.82e+01

#> [5,] 2.75e+05 4.91e+02 1.31e+02 1.84e+02

#> [6,] 3.02e+06 5.23e+03 1.37e+03 1.78e+03

#> [7,] 3.31e+07 5.72e+04 1.50e+04 1.93e+04

#> [8,] 3.63e+08 6.27e+05 1.64e+05 2.11e+05

#> [9,] 3.98e+09 6.87e+06 1.80e+06 2.31e+06

#> [10,] 4.36e+10 7.54e+07 1.97e+07 2.54e+07

#> [11,] 4.78e+11 8.26e+08 2.16e+08 2.78e+08

#> [12,] 5.24e+12 9.06e+09 2.37e+09 3.05e+09

#> [13,] 5.75e+13 9.94e+10 2.60e+10 3.35e+10

#> [14,] 6.31e+14 1.09e+12 2.85e+11 3.67e+11

#> [15,] 6.92e+15 1.20e+13 3.13e+12 4.02e+12

#> [16,] 7.58e+16 1.31e+14 3.43e+13 4.41e+13

#> [17,] 8.32e+17 1.44e+15 3.76e+14 4.84e+14

#> [18,] 9.12e+18 1.58e+16 4.12e+15 5.31e+15

#> [19,] 1.00e+20 1.73e+17 4.52e+16 5.82e+16

#> [20,] 1.10e+21 1.90e+18 4.96e+17 6.38e+17

MSE:

(mse_bvhar_v2 <- mse(pred_bvhar_v2, y_test))

#> y1 y2 y3 y4

#> 6.06e+40 1.81e+35 1.24e+34 2.05e+34

Compare

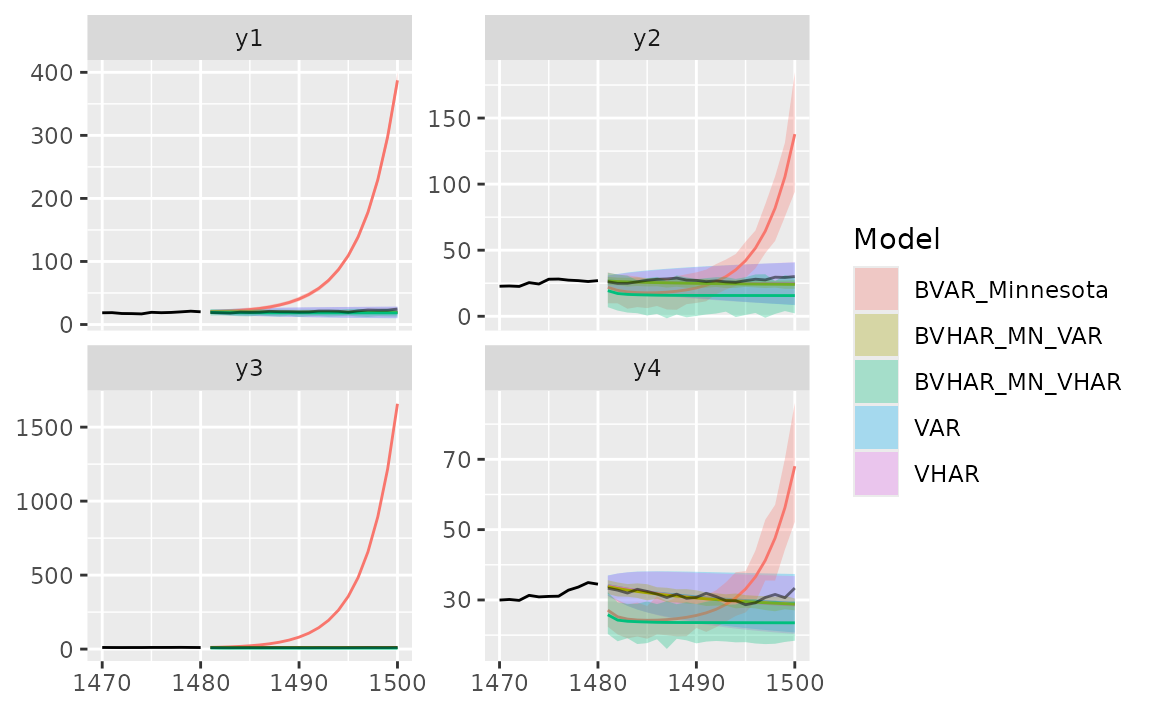

Region

autoplot(predbvhar) and autolayer(predbvhar) draw the forecasting results.

autoplot(pred_var, x_cut = 1470, ci_alpha = .7, type = "wrap") +

autolayer(pred_vhar, ci_alpha = .5) +

autolayer(pred_bvar, ci_alpha = .4) +

autolayer(pred_bvhar_v1, ci_alpha = .2) +

autolayer(pred_bvhar_v2, ci_alpha = .1) +

geom_eval(y_test, colour = "#000000", alpha = .5)

#> Warning: `label` cannot be a <ggplot2::element_blank> object.

#> `label` cannot be a <ggplot2::element_blank> object.

Error

Mean of MSE

list(

VAR = mse_var,

VHAR = mse_vhar,

BVAR = mse_bvar,

BVHAR1 = mse_bvhar_v1,

BVHAR2 = mse_bvhar_v2

) |>

lapply(mean) |>

unlist() |>

sort()

#> VHAR VAR BVHAR1 BVAR BVHAR2

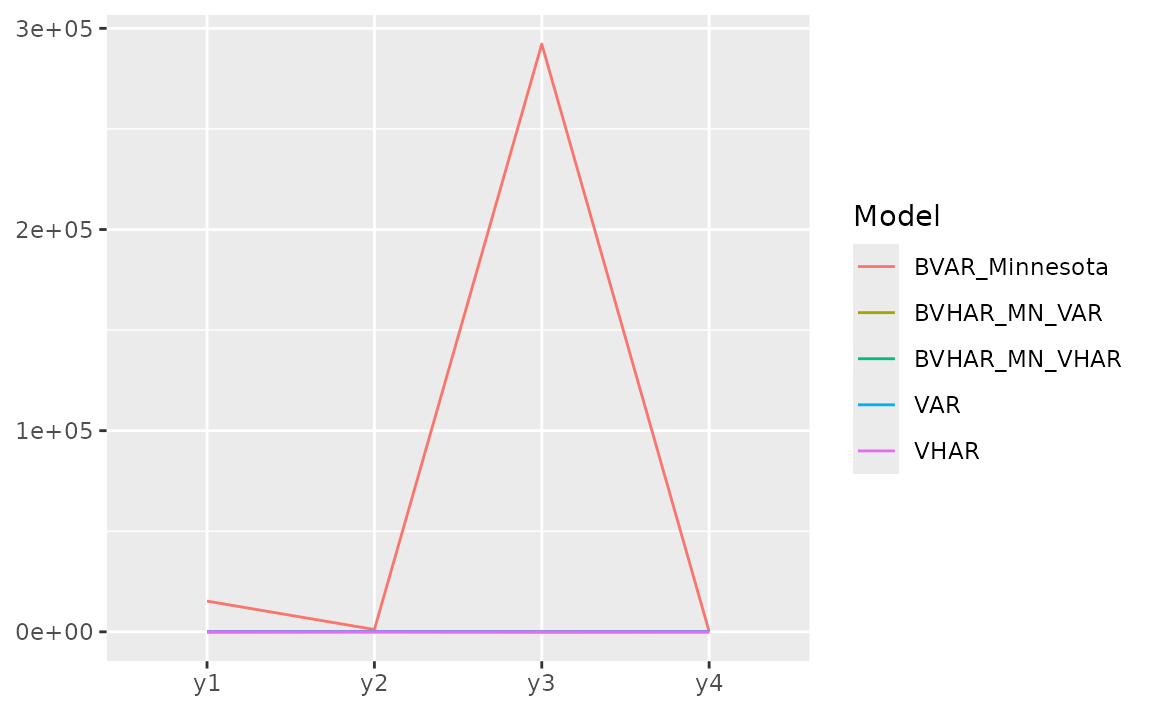

#> 3.36e+00 3.36e+00 4.40e+01 1.55e+13 1.52e+40For each variable, we can see the error with plot.

list(

pred_var,

pred_vhar,

pred_bvar,

pred_bvhar_v1,

pred_bvhar_v2

) |>

gg_loss(y = y_test, "mse")

#> Warning: `label` cannot be a <ggplot2::element_blank> object.

#> `label` cannot be a <ggplot2::element_blank> object.

Relative MAPE (RMAPE), benchmark model: VAR

Out-of-Sample Forecasting

In time series research, out-of-sample forecasting plays a key role. The package therefore provides out-of-sample forecasting functions based on:

- Rolling window: forecast_roll(object, n_ahead, y_test)

- Expanding window: forecast_expand(object, n_ahead, y_test)

Rolling windows

forecast_roll(object, n_ahead, y_test) conducts h >= 1 step rolling-window forecasting.

It fixes the window size and moves the window forward. The window is the training set. In this package, the window size equals the size of the original input data.

Each iteration proceeds as follows:

- The model is fitted on the training set.

- With the fitted model, forecast the next h >= 1 steps ahead. The longest forecast horizon is num_test - h + 1.

- After this window, move the window and repeat the same process.

- Collect forecasts for as long as possible (up to the longest forecast horizon).
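The iteration above can be sketched as plain index bookkeeping in base R. The helper rolling_windows() is hypothetical and only lists which rows would form each training window; it does not fit any model and is not how forecast_roll() is implemented. The sizes assume the training set in this document has 1480 rows (1500 simulated rows with the last 20 held out).

```r
# Hypothetical helper: row indices used in each rolling-window iteration.
rolling_windows <- function(num_train, num_test, h) {
  num_iter <- num_test - h + 1  # longest forecast horizon
  lapply(seq_len(num_iter), function(i) {
    list(
      train = i:(num_train + i - 1),  # fixed-size window shifted by i - 1 rows
      target = num_train + i - 1 + h  # row that the h-step forecast aims at
    )
  })
}

w_roll <- rolling_windows(num_train = 1480, num_test = 20, h = 5)
length(w_roll)               # number of iterations
range(w_roll[[1]]$train)     # first window
range(w_roll[[16]]$train)    # last window, same length as the first
w_roll[[16]]$target          # last forecast target is the final test row
```

With 20 test rows and h = 5 this gives 20 - 5 + 1 = 16 iterations, and every window keeps the same number of rows.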

5-step out-of-sample:

(var_roll <- forecast_roll(model_var, 5, y_test))

#> y1 y2 y3 y4

#> [1,] 19.7 26.0 10.58 32.3

#> [2,] 18.6 25.1 10.08 31.3

#> [3,] 18.4 24.3 9.84 30.9

#> [4,] 18.1 24.5 9.68 30.5

#> [5,] 19.2 25.5 9.99 31.3

#> [6,] 19.2 26.8 10.23 30.9

#> [7,] 19.3 27.3 10.13 30.1

#> [8,] 20.4 28.2 10.38 29.5

#> [9,] 20.1 28.3 10.30 30.3

#> [10,] 19.9 27.0 10.02 29.3

#> [11,] 19.6 26.7 10.05 29.5

#> [12,] 19.6 25.5 9.67 30.3

#> [13,] 20.8 26.6 10.14 29.8

#> [14,] 20.8 26.2 10.06 28.9

#> [15,] 20.6 25.6 9.91 28.9

#> [16,] 19.8 26.6 10.22 28.4

Note that the number of rows equals the longest forecast horizon (here 20 - 5 + 1 = 16).

class(var_roll)

#> [1] "predbvhar_roll" "bvharcv"

names(var_roll)

#> [1] "process" "forecast" "eval_id" "y"

To apply the same evaluation methods, a class named bvharcv has been defined. You can use the evaluation functions above.

vhar_roll <- forecast_roll(model_vhar, 5, y_test)

bvar_roll <- forecast_roll(model_bvar, 5, y_test)

bvhar_roll_v1 <- forecast_roll(model_bvhar_v1, 5, y_test)

bvhar_roll_v2 <- forecast_roll(model_bvhar_v2, 5, y_test)

Relative MAPE, benchmark model: VAR

Expanding Windows

forecast_expand(object, n_ahead, y_test) conducts h >= 1 step expanding-window forecasting.

Unlike rolling windows, the expanding-window method fixes the starting point of the training set, so the window grows at each step. Everything else is the same.
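The difference from the rolling scheme can be seen in a small base-R sketch of the training indices. The helper expanding_windows() is hypothetical, fits no model, and is not the implementation of forecast_expand(); the sizes again assume 1480 training rows and 20 test rows.

```r
# Hypothetical helper: row indices used in each expanding-window iteration.
expanding_windows <- function(num_train, num_test, h) {
  num_iter <- num_test - h + 1  # same number of iterations as rolling windows
  lapply(seq_len(num_iter), function(i) {
    list(
      train = 1:(num_train + i - 1),  # start fixed at row 1, end moves forward
      target = num_train + i - 1 + h  # row that the h-step forecast aims at
    )
  })
}

w_expand <- expanding_windows(num_train = 1480, num_test = 20, h = 5)
train_sizes <- sapply(w_expand, function(x) length(x$train))
train_sizes[c(1, 16)]  # training set grows by one row per iteration
```

The forecast targets are identical to the rolling case; only the amount of history used for each fit changes.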

(var_expand <- forecast_expand(model_var, 5, y_test))

#> y1 y2 y3 y4

#> [1,] 19.7 26.0 10.58 32.3

#> [2,] 18.6 25.1 10.09 31.3

#> [3,] 18.4 24.4 9.84 30.9

#> [4,] 18.1 24.5 9.68 30.4

#> [5,] 19.2 25.6 9.99 31.3

#> [6,] 19.2 26.8 10.25 30.9

#> [7,] 19.3 27.3 10.15 30.2

#> [8,] 20.4 28.2 10.39 29.6

#> [9,] 20.0 28.3 10.31 30.3

#> [10,] 19.9 27.0 10.02 29.3

#> [11,] 19.6 26.7 10.06 29.5

#> [12,] 19.5 25.5 9.67 30.2

#> [13,] 20.7 26.6 10.13 29.8

#> [14,] 20.7 26.2 10.06 28.9

#> [15,] 20.5 25.6 9.92 28.9

#> [16,] 19.8 26.6 10.22 28.4

The result also has class bvharcv.

class(var_expand)

#> [1] "predbvhar_expand" "bvharcv"

names(var_expand)

#> [1] "process" "forecast" "eval_id" "y"

vhar_expand <- forecast_expand(model_vhar, 5, y_test)

bvar_expand <- forecast_expand(model_bvar, 5, y_test)

bvhar_expand_v1 <- forecast_expand(model_bvhar_v1, 5, y_test)

bvhar_expand_v2 <- forecast_expand(model_bvhar_v2, 5, y_test)

Relative MAPE, benchmark model: VAR